This is one of my last posts about repurposing Bing Webmaster Tools’ AI citation data from “that’s interesting, but I don’t know what to do with it” into useful insights.

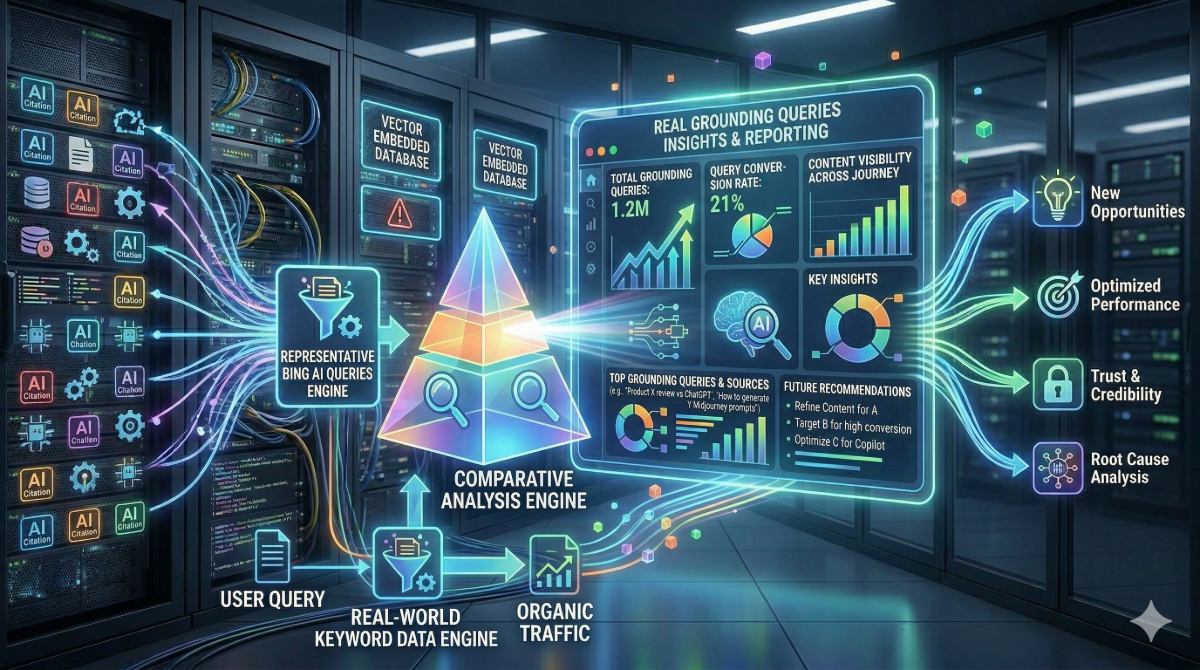

In this post, you’ll learn how to transform the representative grounding queries into a list of probable fan out queries by vectorizing your real-world keyword data and comparing the grounding queries to them.

Prior posts:

- How to Mass Export Daily Page-Level Citation Data from the AI Performance Report in Bing Webmaster Tools

- How to Join Your Bing Webmaster Tools AI Citation Data to Your Google Analytics Data

Tools and data you will need

- Google Colab (or Python)

- Google Drive

- Your Bing Webmaster Tools grounding queries

- A set of real-world queries you can compare against. For this example and for the sake of consistency, I will use queries from Bing Webmaster Tools, but you can use queries from Google Search Console, or both.

Context about grounding queries

If you weren’t aware, the “grounding queries” that BWT shows aren’t real queries. They’re representative queries of query fan out queries.

“Each row in the grounding queries tab in Bing Webmaster Tools displays a short phrase. These phrases are grouped representations from your site that were retrieved and cited in AI-generated answers. They are not full user questions or prompts.”

“Grounding Queries Your Content Appears In: Shows the key phrases the AI used when retrieving content that was cited in its answer.”

While it is one-step removed, we have quasi-real-world data that we can use in other contexts, like identifying the likely text that Copilot grounds its answer in.

What this will do

I will be creating a very simple vector embedding database in ChromaDB that will be locally hosted on Google Drive. It will vectorize the global list of queries into the database from a CSV using Hugging Face (all-MiniLM-L6-v2), then will compare the grounding queries against that database and it will identify the closest related queries.

Setup

Files

Export a global list of queries from your real-world source of choice – either Bing Webmaster Tools, Google Search Console, or both – and export only the queries with a heading of Keyword into a CSV. For this example, I am using only Bing Webmaster Tools keyword data, just to be platform-consistent. Name the export global_keywords.csv.

Export your full list of grounding queries from Bing Webmaster Tools AI Performance report. Name the CSV grounded_queries.csv. Make sure the heading on the queries is Grounding Query.

Google Colab, Mounting the Drive, and Uploading the Files

Create a new notebook in Google Colab and install your necessary components.

!pip install -q chromadbNext, mount your drive.

from google.colab import drive

import os

# 1. Mount Google Drive

drive.mount('/content/drive')

# 2. Define your local path (this folder will appear on your desktop via Google Drive sync)

DB_PATH = "/content/drive/My Drive/chroma_keywords_db"

os.makedirs(DB_PATH, exist_ok=True)This will create a folder called chroma_keywords_db. Upload your CSVs to that folder.

Creating a Vector Embedding Database

Next, create your database of vectorized queries from the global dataset by copying and pasting the script below. This will load the CSV, create a Chroma database, and then use the model all-MiniLM-L6-v2 to create the embeddings. It took me about 30 seconds to embed roughly 4000 queries.

import pandas as pd

import chromadb

from chromadb.utils import embedding_functions

import os

# 1. Path to your CSV file on Google Drive

# Change 'global_keywords.csv' to your actual filename

CSV_FILE_PATH = "/content/drive/My Drive/chroma_keywords_db/global_keywords.csv"

# 2. Load the CSV into a Dataframe

# Assuming your keywords are in a column named 'Keyword'

df_global = pd.read_csv(CSV_FILE_PATH)

global_list = df_global['Keyword'].astype(str).tolist()

# 3. Initialize Chroma Persistent Client

client = chromadb.PersistentClient(path=DB_PATH)

# 4. Use model optimized for short keywords

model_name = "all-MiniLM-L6-v2"

ef = embedding_functions.SentenceTransformerEmbeddingFunction(model_name=model_name)

# 5. Create/Get collection

global_collection = client.get_or_create_collection(

name="global_site_keywords",

embedding_function=ef

)

# 6. Add keywords to the collection

# We use the list index as a simple unique ID

global_collection.add(

documents=global_list,

ids=[f"global_{i}" for i in range(len(global_list))]

)

print(f"Successfully loaded {len(global_list)} keywords from CSV into Chroma.")Predicting real-world grounding queries

Finally, copy, paste, and run the script below to load your representative grounding queries, then compare them to the vectorized database. By default, it will provide a list of the 10 closest keywords for each grounding query in a new CSV. If you want to adjust the number of matches, change the number in n_results.

import pandas as pd

import os

# 1. Load the Grounded Queries

GROUNDED_QUERIES_CSV = "/content/drive/My Drive/chroma_keywords_db/grounded_queries.csv"

df_grounded = pd.read_csv(GROUNDED_QUERIES_CSV)

grounded_queries_list = df_grounded['Grounding Query'].astype(str).tolist()

# 2. Query for the Top 10 matches

results = global_collection.query(

query_texts=grounded_queries_list,

n_results=10

)

# 3. Flatten the results for a clean CSV

# Since 'results' returns lists of lists, we loop through and unpack them

comparison_output = []

for i, query in enumerate(grounded_queries_list):

# Get the 10 docs and 10 distances for this specific query

top_docs = results['documents'][i]

top_distances = results['distances'][i]

for rank in range(len(top_docs)):

comparison_output.append({

"Bing/Copilot Query": query,

"Rank": rank + 1,

"Matched Global Keyword": top_docs[rank],

"Similarity Distance": round(top_distances[rank], 4)

})

# 4. Export to CSV

df_final = pd.DataFrame(comparison_output)

EXPORT_OUTPUT_PATH = "/content/drive/My Drive/chroma_keywords_db/top_10_keyword_matches.csv"

df_final.to_csv(EXPORT_OUTPUT_PATH, index=False)

print(f"Done! Created a list of {len(df_final)} potential matches.")Concluding thoughts

This is just a simple starting point. For this tutorial, I selected the easiest vector database I could find (ChromaDB) and harnessed a readily available model (all-MiniLM-L6-v2). You can improve upon this in many ways.

The full script

The script above was broken up for ease of access. If you already have a Google Drive folder location created, you can replace the ad hoc one that is created during the mounting process and upload the CSVs yourself, then run the full script in one go.

# Imports

import pandas as pd

import os

import chromadb

from chromadb.utils import embedding_functions

from google.colab import drive

# 1. Mount Google Drive

drive.mount('/content/drive')

# 2. Define your local path (this folder will appear on your desktop via Google Drive sync)

DB_PATH = "/content/drive/My Drive/chroma_keywords_db"

os.makedirs(DB_PATH, exist_ok=True)

# 3. Path to your CSV file on Google Drive

# Change 'global_keywords.csv' to your actual filename

CSV_FILE_PATH = "/content/drive/My Drive/chroma_keywords_db/global_keywords.csv"

# 4. Load the CSV into a Dataframe

# Assuming your keywords are in a column named 'Keyword'

df_global = pd.read_csv(CSV_FILE_PATH)

global_list = df_global['Keyword'].astype(str).tolist()

# 5. Initialize Chroma Persistent Client

client = chromadb.PersistentClient(path=DB_PATH)

# 6. Use model optimized for short keywords

model_name = "all-MiniLM-L6-v2"

ef = embedding_functions.SentenceTransformerEmbeddingFunction(model_name=model_name)

# 7. Create/Get collection

global_collection = client.get_or_create_collection(

name="global_site_keywords",

embedding_function=ef

)

# 8. Add keywords to the collection

# We use the list index as a simple unique ID

global_collection.add(

documents=global_list,

ids=[f"global_{i}" for i in range(len(global_list))]

)

print(f"Successfully loaded {len(global_list)} keywords from CSV into Chroma.")

# 9. Load the Grounded Queries

GROUNDED_QUERIES_CSV = "/content/drive/My Drive/chroma_keywords_db/grounded_queries.csv"

df_grounded = pd.read_csv(GROUNDED_QUERIES_CSV)

grounded_queries_list = df_grounded['Grounding Query'].astype(str).tolist()

# 10. Query for the Top 10 matches

results = global_collection.query(

query_texts=grounded_queries_list,

n_results=10

)

# 11. Flatten the results for a clean CSV

# Since 'results' returns lists of lists, we loop through and unpack them

comparison_output = []

for i, query in enumerate(grounded_queries_list):

# Get the 10 docs and 10 distances for this specific query

top_docs = results['documents'][i]

top_distances = results['distances'][i]

for rank in range(len(top_docs)):

comparison_output.append({

"Bing/Copilot Query": query,

"Rank": rank + 1,

"Matched Global Keyword": top_docs[rank],

"Similarity Distance": round(top_distances[rank], 4)

})

# 12. Export to CSV

df_final = pd.DataFrame(comparison_output)

EXPORT_OUTPUT_PATH = "/content/drive/My Drive/chroma_keywords_db/top_10_keyword_matches.csv"

df_final.to_csv(EXPORT_OUTPUT_PATH, index=False)

print(f"Done! Created a list of {len(df_final)} potential matches.")